Three first-author papers, one second-author paper, and one second-to-last-author paper: that is my success at CHI 2018. After a hard year, all five papers were accepted at CHI, and each one covers a very different topic.

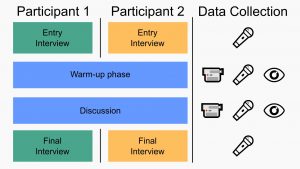

The first paper is all about how to evaluate the disruptiveness of mobile interactions in a social setting. In detail, our literature review showed that there is no quick method to understand how mobile interaction influences a face-to-face conversation. We therefore developed a mixed-methods approach that allows researchers to rapidly analyze how disruptive a new interaction method is. Moreover, the method also provides insights into how the interaction could be further improved.

With the second paper, we studied how two parties interact with large high-resolution displays. Here, we had two participants at a time play Pac-Many on a 4 m × 1 m screen (six 50-inch 4K screens). Pac-Many is a multiplayer game based on the original 1980 Pac-Man and optimized to run on such a large screen. Further, we implemented the game so that players can use their own devices, following the ‘bring your own device’ approach. In the paper, we show that players' real-world movements in front of the screen differ depending on whether they play together as a team or against each other in a one-on-one game.

Extending my previous research (see Modeling Distant Pointing), we investigated how mid-air pointing is affected in VR compared to a real-world pointing scenario. We show that the mid-air pointing correction functions differ between VR and the real world, resulting in two different models. In contrast to our earlier paper, we implemented the correction functions and conducted a second study in which participants pointed at targets with and without the correction function.
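
The post does not say what form the correction functions take. As a rough illustration only, the sketch below assumes a degree-2 polynomial in the observed yaw and pitch angles, fit by ordinary least squares to predict the offset from the pointing direction to the true target direction. The model form, the function names (`design_matrix`, `fit_correction`, `apply_correction`, `rms_error`), and the synthetic data are all hypothetical and are not the models or data from the paper. Fitting one coefficient set on VR data and another on real-world data would mirror the finding that the two environments need separate models.

```python
import numpy as np

def design_matrix(yaw, pitch):
    """Degree-2 polynomial features of observed pointing angles (hypothetical model form)."""
    yaw = np.asarray(yaw, dtype=float)
    pitch = np.asarray(pitch, dtype=float)
    return np.column_stack([np.ones_like(yaw), yaw, pitch, yaw * pitch, yaw**2, pitch**2])

def fit_correction(obs_yaw, obs_pitch, true_yaw, true_pitch):
    """Least-squares fit of one coefficient vector per axis, predicting (true - observed)."""
    X = design_matrix(obs_yaw, obs_pitch)
    coef_yaw, *_ = np.linalg.lstsq(X, np.asarray(true_yaw) - obs_yaw, rcond=None)
    coef_pitch, *_ = np.linalg.lstsq(X, np.asarray(true_pitch) - obs_pitch, rcond=None)
    return coef_yaw, coef_pitch

def apply_correction(obs_yaw, obs_pitch, coef_yaw, coef_pitch):
    """Add the predicted offset to the observed angles."""
    X = design_matrix(obs_yaw, obs_pitch)
    return np.asarray(obs_yaw) + X @ coef_yaw, np.asarray(obs_pitch) + X @ coef_pitch

def rms_error(yaw, pitch, true_yaw, true_pitch):
    """Root-mean-square angular error combined over both axes (degrees, by assumption)."""
    return float(np.sqrt(np.mean((yaw - true_yaw) ** 2 + (pitch - true_pitch) ** 2)))

# Synthetic example data: a made-up systematic bias plus noise, NOT data from the paper.
rng = np.random.default_rng(0)
n = 200
true_yaw = rng.uniform(-40, 40, n)
true_pitch = rng.uniform(-20, 20, n)
obs_yaw = true_yaw + 3.0 + 0.1 * true_yaw + rng.normal(0, 0.3, n)
obs_pitch = true_pitch - 2.0 + 0.005 * true_pitch**2 + rng.normal(0, 0.3, n)

# For brevity, the model is evaluated on the same samples it was fit on.
coef_yaw, coef_pitch = fit_correction(obs_yaw, obs_pitch, true_yaw, true_pitch)
corr_yaw, corr_pitch = apply_correction(obs_yaw, obs_pitch, coef_yaw, coef_pitch)

err_before = rms_error(obs_yaw, obs_pitch, true_yaw, true_pitch)
err_after = rms_error(corr_yaw, corr_pitch, true_yaw, true_pitch)
print(f"RMS error before: {err_before:.2f} deg, after: {err_after:.2f} deg")
```

With the synthetic bias above, the corrected angles should land much closer to the true target angles than the raw pointing angles do; real pointing data would of course need a proper held-out evaluation.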

Here, we again extended our work on “Finger Placement and Hand Grasp During Smartphone Interaction” (CHI WIP’16). The first author of both papers is Huy Viet Le. In the new paper, we investigated not only finger placement in a static scenario but also the full range of each finger on today’s phones. We used an optical tracking system and asked participants to perform two tasks: a) explore the full range of each finger on four differently sized phones and b) indicate the area each finger can reach comfortably on all four phones.

While commodity smartphones and tablets have become better at rejecting the palm when it touches the screen over the last years, in this paper we envision using the palm as a new input technique. We conducted an experiment in which participants performed a “PalmTouch”; in the first study, we recorded only the capacitive image of the palm itself. Based on the recorded data, we trained a machine learning model that distinguishes between palm and finger touches with 99.58% accuracy.
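
The post does not describe the model itself. To illustrate the underlying idea, that a palm produces a much larger contact blob in the capacitive image than a fingertip, the sketch below uses a deliberately simple stand-in: a one-feature threshold classifier on contact area, learned from labeled examples. This is not the model from the paper. The sensor resolution (`GRID`), the blob radii, the activation level, and all function names are hypothetical, and the images are synthetic Gaussian blobs rather than recorded data.

```python
import numpy as np

GRID = (27, 15)  # hypothetical capacitive sensor resolution (rows, cols)

def synthetic_touch(rng, radius):
    """Render a synthetic capacitive image: a Gaussian blob of the given radius plus sensor noise."""
    rows, cols = np.mgrid[0:GRID[0], 0:GRID[1]]
    cy, cx = rng.uniform(5, GRID[0] - 5), rng.uniform(3, GRID[1] - 3)
    img = np.exp(-((rows - cy) ** 2 + (cols - cx) ** 2) / (2 * radius**2))
    return img + rng.normal(0, 0.02, GRID)

def contact_area(img, level=0.3):
    """Feature: number of sensor cells whose signal exceeds `level` (hypothetical value)."""
    return int(np.count_nonzero(img > level))

def fit_threshold(areas, labels):
    """Place the decision threshold halfway between the mean finger and mean palm areas."""
    areas, labels = np.asarray(areas), np.asarray(labels)
    return float((areas[labels == 0].mean() + areas[labels == 1].mean()) / 2)

def predict(area, threshold):
    """Return 1 for palm, 0 for finger."""
    return 1 if area > threshold else 0

def make_dataset(rng, n_per_class):
    """Label 0 = finger (small blobs), label 1 = palm (large blobs); radii are made up."""
    areas, labels = [], []
    for label, (lo, hi) in ((0, (0.8, 1.2)), (1, (3.5, 4.5))):
        for _ in range(n_per_class):
            areas.append(contact_area(synthetic_touch(rng, rng.uniform(lo, hi))))
            labels.append(label)
    return np.array(areas), np.array(labels)

rng = np.random.default_rng(42)
train_areas, train_labels = make_dataset(rng, 100)
threshold = fit_threshold(train_areas, train_labels)

test_areas, test_labels = make_dataset(rng, 100)
predictions = np.array([predict(a, threshold) for a in test_areas])
accuracy = float(np.mean(predictions == test_labels))
print(f"threshold={threshold:.1f} cells, accuracy={accuracy:.3f}")
```

On such cleanly separated synthetic blobs, the threshold should classify nearly every test sample correctly. This says nothing about real capacitive data or about the 99.58% accuracy of the paper's model, which was trained on recorded touches.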